Although the implementation and sustainment problems I discussed in my last posting certainly impede progress or limit profitability, in my view there is another, more compelling reason why improvement initiatives have failed in many companies. Whether you are using Lean or Six Sigma or Lean Six Sigma as your primary improvement tactic, there still seems to be something missing that is limiting your company’s ability to make money now and in the future. I want to state up front that this missing link is not the methodologies (i.e. Lean, Six Sigma or Lean Six Sigma) themselves because we all know that processes are full of waste and variation that must be reduced or removed. So what is missing?

My motivation for starting this blog in 2010 was to share the benefit of my experience and my own lessons learned in the 40-plus years I’ve been engaged in improving companies. I wanted to help businesses flourish and become more profitable. Because I had been successful in helping businesses improve their bottom line, I felt almost duty-bound to share what I had discovered so many years ago. What I’ve learned along the way is that if Lean, Six Sigma, Lean Six Sigma or some other improvement methodology is to be successfully deployed, knowing where to focus your efforts is the key to making money. I named my blog site Focus and Leverage for a reason. I wanted to help companies answer the three basic questions that will lead them to new and sustained levels of profitability: What to change? What to change to? And how to make the change happen?

One day in the 1990s I received a phone call from a man whom I had always considered to be my mentor. It was a simple phone call telling me he needed my help with a company in Kentucky. I never hesitated and accepted his offer, but I never asked him what the job was. Silly, huh? I mean, wouldn’t any normal human being offered a job ask that basic question? You see, I had worked for this man in several other companies and I trusted him completely. When I arrived at this company I found out that I had been hired to be the General Manager of a plant that was on the verge of shutting down. You must understand that my background prior to this was all Quality and Engineering, with zero experience in Operations Management. Talk about being lost! But I reasoned to myself that I would just listen and learn from the two Operations Managers (OMs) who were at the plant already. I soon found out that was not the answer, because one of them had just been hired and his background was mostly in job shop type environments. The other OM had been with the company for 25 years, so he may have been part of the problem.

To make a long story short, in less than 4 months we not only stopped the financial hemorrhaging, we became profitable! And the lessons I learned at this plant changed my approach to managing improvement forever. What changed me, you may be wondering? I found a copy of Eli Goldratt’s masterpiece, The Goal. That night I stayed up all night and read it, soaking in all of the many lessons contained in it. I had never heard of the Theory of Constraints before and I became mesmerized by it. I went out and bought multiple copies of this book for my team and we even had a daily discussion session about the book. My team at this plant became very passionate about applying what they had learned, and the improvements came rapidly and they were huge. What amazed me the most was our on-time delivery rate, which was abysmal when I took over but went to nearly 100% in very short order! I knew then that I had found the secret and it has stayed with me all these years.

So what was it about the Theory of Constraints (TOC) that changed my approach? Eli Goldratt, the genius author of The Goal and the inventor of TOC, believed that organizations could not maximize and sustain profitability until they maximized the throughput of their total system. Goldratt taught my team that the sum of all local optimizations does not translate into system optimization. This simple message was our driving force and, in my opinion, the quintessential reason why so many Lean Six Sigma implementations have failed to deliver sustained bottom line results. Our lesson was simple: improving isolated parts of the system does nothing to improve the total system output. It was a simple but compelling message for us and was the single most important lesson we learned in those days.
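
To make that lesson concrete, here is a minimal sketch in Python. It assumes a simple serial line in which each step feeds the next, so the line can ship no faster than its slowest step. The step names and capacities are made-up numbers for illustration only, not data from the Kentucky plant.

```python
# Hypothetical four-step serial line; capacities are in units per hour
# and are invented for illustration.
capacities = {"cut": 12, "weld": 7, "paint": 10, "pack": 15}


def system_throughput(caps):
    """A serial line can ship no faster than its slowest step."""
    return min(caps.values())


baseline = system_throughput(capacities)

# Local improvement: make a non-constraint step (pack) 50% faster.
local = dict(capacities, pack=capacities["pack"] * 1.5)

# Focused improvement: add just 2 units/hour at the slowest step (weld).
focused = dict(capacities, weld=capacities["weld"] + 2)

print(baseline, system_throughput(local), system_throughput(focused))
```

Under these assumptions, the big gain at packing leaves system output where it started, while the small gain at welding raises it. Real plants have variation, shared resources and rework, so treat this as an illustration of the principle rather than a model of any actual line.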

So for me the real message behind the Theory of Constraints’ process of ongoing improvement is to always focus your efforts on achieving system improvement rather than localized improvement. In order to achieve this focus, Goldratt developed the following five-step process of ongoing improvement:

1. Identify the system’s constraint(s). What part of your system is limiting your ability to deliver more product or service?

2. Decide how to exploit the system’s constraint(s). What do you need to do to improve your system throughput?

3. Subordinate everything else to the above decision. Don’t ever out-pace or out-run your system constraint.

4. Elevate the system’s constraint(s). If, after you’ve fully exploited your system constraint, your constraint capacity is still too low, you may need to spend some money to increase it.

5. If in the previous steps a constraint has been broken, go back to Step 1, but do not allow inertia to cause a system constraint. Once the current constraint has been eliminated, a new one will take its place immediately, so be prepared to move your improvement resources to your new leverage point.
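
The loop described in these five steps can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: a serial line, fixed capacities, and hypothetical helper names (`identify_constraint`, `release_rate`, `elevate`) and numbers that are not part of Goldratt's work. Step 2 (exploit) is about how you run the constraint day to day, so it is not modeled here.

```python
def identify_constraint(capacities):
    """Step 1: the step with the lowest capacity limits the whole line."""
    return min(capacities, key=capacities.get)


def release_rate(capacities):
    """Step 3: release work no faster than the constraint can process it."""
    return capacities[identify_constraint(capacities)]


def elevate(capacities, step, extra):
    """Step 4: return a copy of the line with added capacity at one step."""
    updated = dict(capacities)
    updated[step] += extra
    return updated


# Hypothetical line; capacities in units per day, invented for illustration.
line = {"machining": 20, "assembly": 14, "test": 16}

first = identify_constraint(line)        # Step 1
rate_before = release_rate(line)         # Step 3

line_after = elevate(line, first, 4)     # Step 4: spend money on the constraint

second = identify_constraint(line_after)  # Step 5: go back to Step 1
rate_after = release_rate(line_after)

print(first, rate_before, "->", second, rate_after)
```

In this made-up case, once assembly is elevated the constraint moves to test, which is exactly why Step 5 sends you back to Step 1 instead of letting you keep improving the old constraint out of habit.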

Theoretically, the implications of TOC for improvement initiatives can be profound. From a Throughput Accounting perspective (which I’ve covered in past postings), reduction in inventory (one of the benefits of Lean) has a functional lower limit of zero, and once you’ve reached it, there is little, if anything, left to harvest. Lowering inventory can free up substantial dollars, but it is a one-time occurrence. Operating expense (OE) reduction also has a functional lower limit, and when you reach this lower limit, further attempts to reduce it can actually debilitate your organization, especially if you reduce OE through layoffs, which, I might add, I never approve of.

Throughput in the TOC world is revenue minus totally variable costs (e.g. cost of raw materials, sales commissions, shipping costs, etc.). Just creating products to fill up storage racks is not throughput at all because no money has been received from the customer. Even though Throughput improvement probably has a practical upper limit, theoretically it does not, and the secret to generating more of it is recognizing the existence of a system constraint. The simple fact is, you cannot produce more throughput until you free up more productive capacity in your system constraint! And when the productive capacity of your system exceeds the number of customer orders, the market becomes the new constraint and your new area of focus. When this happens, things like faster lead times, superior on-time deliveries, world class quality and even price reductions can be used to generate more sales to utilize this freed-up constraint capacity. It is important to remember that if you have excess capacity, as long as your new product pricing covers your cost of raw materials and other totally variable costs and you have not added excess labor to achieve this excess capacity, the net flows directly to the bottom line. Of course, all three of these actions (throughput increases, inventory reductions, and operating expense reduction) have a positive impact on net profit and return on investment. Think about this: if there were no constraints in your company, wouldn’t your profits be infinite?
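
The arithmetic behind this can be sketched with the usual Throughput Accounting relations: throughput is revenue minus totally variable costs, net profit is throughput minus operating expense, and return on investment is net profit divided by investment (inventory). The function names and dollar figures below are hypothetical and chosen only to keep the numbers easy to follow.

```python
def throughput(revenue, totally_variable_costs):
    """T = revenue minus totally variable costs."""
    return revenue - totally_variable_costs


def net_profit(t, operating_expense):
    """NP = T - OE."""
    return t - operating_expense


def return_on_investment(t, operating_expense, investment):
    """ROI = (T - OE) / I."""
    return (t - operating_expense) / investment


# Hypothetical annual figures, invented for illustration.
revenue, tvc = 1_000_000, 400_000
operating_expense, investment = 450_000, 500_000

t_base = throughput(revenue, tvc)
np_base = net_profit(t_base, operating_expense)
roi_base = return_on_investment(t_base, operating_expense, investment)

# An extra order filled with idle capacity: price covers its totally
# variable costs, and no labor or other operating expense is added.
order_price, order_tvc = 50_000, 30_000
t_new = throughput(revenue + order_price, tvc + order_tvc)
np_new = net_profit(t_new, operating_expense)

print(t_base, np_base, roi_base, np_new - np_base)
```

With these assumed figures, the whole margin on the extra order (price minus totally variable costs) shows up as additional net profit, because operating expense did not change.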

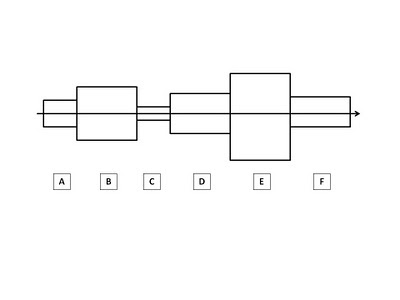

Just as a chain has a weakest link, there will always be a resource of some kind that limits the system from maximizing its output. It is my belief that in order to improve the system’s performance and sustain it, you must locate the weakest organizational link and leverage it by focusing your improvements there. It may not be obvious to you, but when you are looking for a starting point in any improvement initiative, it should always be the system constraint, simply because it offers the greatest opportunity to increase profits in a relatively short period of time. Whether your constraint is a flow problem, a quality problem, a capacity problem, a policy problem or even the market, it should be identified as the area on which to focus your efforts. And to me, this is the other part of the answer to the question of why so many improvement initiatives have failed: they simply lack the right focus to leverage maximum profitability in the shortest amount of time.

I started out this posting by saying that there’s absolutely nothing wrong with Lean, Six Sigma or Lean Six Sigma, and in fact, at the plant in Kentucky we had no Black Belts or Lean experts. What we did have was our new-found knowledge of system improvement rather than localized improvement. At the end of the day, accepting the concept of system improvement is what really matters. TOC, Lean and Six Sigma are totally complementary and need each other to deliver maximum and sustained profitability. So if you want sustained profitability for your company, try the integration of the Theory of Constraints, Lean and Six Sigma. It is the focus of Bruce Nelson’s and my new book, Epiphanized: Integrating Theory of Constraints, Lean and Six Sigma.

Bob Sproull